I'd go and look at the data sources that provide information to that dashboard, then see which ETL jobs in turn update those data sources, and check the job schedules to confirm things are running as expected. Given our audit tables and logs, I can only verify so much before I need to confer with the systems admins for our non-cloud assets. They'll need to check the application and system logs that I don't have access to, and run other diagnostics as needed.
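
Checking job schedules against the audit tables can be scripted. The sketch below assumes you can pull each source's last successful load time out of an audit table into a dictionary; the source names (`sales_fact`, `customer_dim`, `product_dim`), the timestamps and the one-day interval are hypothetical. It flags any source whose last success is older than its schedule allows, including sources with no recorded success at all.

```python
from datetime import datetime, timedelta


def find_stale_sources(
    last_success: dict[str, datetime],
    expected_every: dict[str, timedelta],
    now: datetime,
) -> list[str]:
    """Return sources whose last successful load is older than their schedule allows."""
    stale = []
    for source, interval in expected_every.items():
        finished = last_success.get(source)
        # No recorded success at all is treated as stale, not as healthy.
        if finished is None or now - finished > interval:
            stale.append(source)
    return sorted(stale)


if __name__ == "__main__":
    # Hypothetical values, e.g. read from an audit table.
    now = datetime(2024, 1, 2, 6, 0)
    last_success = {
        "sales_fact": datetime(2024, 1, 2, 2, 0),      # 4 hours ago
        "customer_dim": datetime(2023, 12, 31, 2, 0),  # 2 days ago
    }
    expected_every = {
        "sales_fact": timedelta(days=1),
        "customer_dim": timedelta(days=1),
        "product_dim": timedelta(days=1),  # never loaded
    }
    print(find_stale_sources(last_success, expected_every, now))
    # prints ['customer_dim', 'product_dim']
```
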

I work my way backwards through the pipeline until the point of failure is identified. Frankly, this doesn't happen at a lot of workplaces, but you should keep your own.
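
Working backwards can be made mechanical if the pipeline's lineage is written down as a map from each job or table to its direct inputs. This is a minimal sketch, assuming such a map exists and that you have already marked which nodes failed or are stale; all node names are hypothetical. It walks upstream from the dashboard with a breadth-first search and returns the failed nodes whose own inputs look healthy, i.e. the earliest points of failure rather than everything downstream that merely inherited missing data.

```python
from collections import deque


def earliest_failures(
    target: str,
    upstream: dict[str, list[str]],
    failed: set[str],
) -> list[str]:
    """Walk upstream from `target`; return failed nodes whose direct inputs all look healthy."""
    seen = {target}
    queue = deque([target])
    earliest = set()
    while queue:
        node = queue.popleft()
        parents = upstream.get(node, [])
        # A failed node with no failed inputs is where the problem starts.
        if node in failed and not any(p in failed for p in parents):
            earliest.add(node)
        for parent in parents:
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return sorted(earliest)


if __name__ == "__main__":
    # Hypothetical lineage: each node maps to the nodes it reads from.
    upstream = {
        "sales_dashboard": ["sales_mart"],
        "sales_mart": ["stg_orders", "stg_customers"],
        "stg_orders": ["orders_source"],
        "stg_customers": ["crm_source"],
    }
    failed = {"sales_dashboard", "sales_mart", "stg_orders"}
    print(earliest_failures("sales_dashboard", upstream, failed))
    # prints ['stg_orders']
```
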

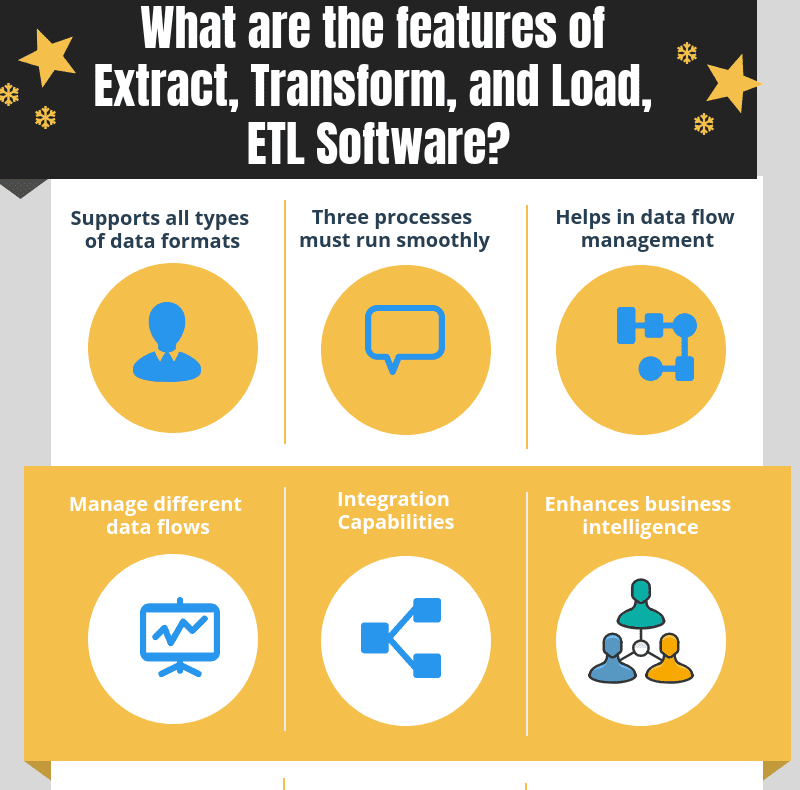

I'm going to assume this is batch-style ETL; some of it might be slightly different for streaming data.

Is the job set up to automatically notify the person or department who consumes the data? If not, I notify them and ask whether they have any hard deadlines. If it is, I still reach out to let them know we are working on it and to confirm any deadlines.

Is the job idempotent? If it is, the first step is to rerun the job to see whether it was an intermittent error. (If not, we are probably going to need to put together a manual batch of the diff and check the data; we'll do this once we confirm the problem.)

For a lost connection, first verify that you can connect to the DWH. If you can, remote into the source system and attempt to telnet to the port (it should be the standard port, but we can verify that in the connection string).

For a failure transforming the data, my first instinct is to look for a data typing issue: a string that is too long, a decimal value in a field that should be an integer, data that contains control characters that weren't handled properly, and so on.

Once the issue is identified, move to remediate it immediately, meaning do the easiest thing you can to get the data available to whoever consumes it. Then evaluate the impact of the failure(s) and the likelihood of them recurring to decide at what level it should be resolved. I like to think about points of failure instead of root causes. Sometimes there are multiple points of failure, and you are better off adding extra controls or validations earlier in the process, before the most immediate cause. For data typing issues you may want to handle it at the front end of the source system with additional validation, you may want to change your ETL job to be more permissive, or you may choose to map the invalid data to something valid.

Make sure it's recorded appropriately: any changes are documented and any required steps are added to the troubleshooting documents.

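
The data typing checks described above can be run against a batch before it reaches the load step. This is a hedged sketch: the `Column` description, the two column kinds (`varchar` and `int`) and the messages are assumptions for illustration, not any particular ETL tool's API. Note that in this sketch a value like `12.0` passes as an integer; only a real fractional part is flagged. `split_batch` shows the "more permissive" option: bad rows are set aside with their reasons instead of failing the whole load.

```python
import unicodedata
from dataclasses import dataclass
from decimal import Decimal, InvalidOperation
from typing import Optional


@dataclass
class Column:
    # Hypothetical, minimal column description; real schemas carry far more.
    name: str
    kind: str  # "varchar" or "int" in this sketch
    max_length: Optional[int] = None


def has_control_chars(value: str) -> bool:
    # Unicode category "Cc" covers characters like NUL and ESC; tab, CR and LF
    # are also "Cc" but are allowed here because many sources legitimately contain them.
    return any(unicodedata.category(ch) == "Cc" and ch not in "\t\r\n" for ch in value)


def check_row(row: dict[str, str], columns: list[Column]) -> list[str]:
    """Return a description of every typing problem found in one raw row."""
    problems = []
    for col in columns:
        value = row.get(col.name, "")
        if has_control_chars(value):
            problems.append(f"{col.name}: contains control characters")
        if col.kind == "varchar" and col.max_length is not None and len(value) > col.max_length:
            problems.append(f"{col.name}: length {len(value)} exceeds {col.max_length}")
        if col.kind == "int":
            try:
                number = Decimal(value)
            except InvalidOperation:
                problems.append(f"{col.name}: {value!r} is not numeric")
                continue
            if not number.is_finite():
                problems.append(f"{col.name}: {value!r} is not a finite number")
            elif number != number.to_integral_value():
                problems.append(f"{col.name}: {value!r} has a fractional part")
    return problems


def split_batch(rows: list[dict[str, str]], columns: list[Column]):
    """Keep loadable rows and set aside bad ones with their reasons, instead of failing the batch."""
    good, rejected = [], []
    for row in rows:
        problems = check_row(row, columns)
        if problems:
            rejected.append((row, problems))
        else:
            good.append(row)
    return good, rejected
```

Whether rejected rows go to a quarantine table, get mapped to a valid default, or block the load is the business decision described above; the sketch only separates them.
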
The way I would answer is to say that I would follow the document, written and agreed when the code was implemented, on what to do when failures happen. This should include details of who to notify, how quickly it needs to be working, and what steps should be taken to investigate and resolve the issue. These are very specific to the business, so I don't think there is a generic answer to all of them, but by working with stakeholders you should be able to agree on answers. To begin with, the document might be quite bare and just include the business process, the log file locations that can be used to investigate the issue, and how to rerun failed steps (or the whole process), or say not to rerun if later runs have succeeded. If you expect bad connections during load, or failed transforms, test this in non-production and write down how to resolve it. When the first failure happens and is resolved, the document is updated to say what to do if this problem occurs again. If the same issue recurs, I would look at implementing some code to automatically do what has been documented. Having a well-documented process saves you from having to think too much when something breaks in the middle of the night or, even better, means somebody else can follow it and not need to contact you.
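
Once a failure is documented, the documented response can be automated. A minimal sketch, assuming the runbook says "rerun idempotent jobs after a dropped connection, escalate otherwise"; `run_per_runbook`, `EscalationNeeded`, the retry count and the back-off are hypothetical choices, and `notify` stands in for whatever alerting channel your team uses.

```python
import logging
import time
from typing import Callable

log = logging.getLogger("etl_runbook")


class EscalationNeeded(Exception):
    """Raised when the documented automatic fix is exhausted and a person must take over."""


def run_per_runbook(
    job: Callable[[], None],
    *,
    idempotent: bool,
    max_attempts: int = 3,
    backoff_seconds: float = 30.0,
    notify: Callable[[str], None] = print,
) -> None:
    """Run `job`, applying the (hypothetical) documented response to dropped connections."""
    for attempt in range(1, max_attempts + 1):
        try:
            job()
            return
        except ConnectionError as exc:
            if not idempotent:
                # Documented step: never blindly rerun; a manual diff is needed.
                notify(f"Connection lost on non-idempotent job ({exc}); manual diff needed.")
                raise EscalationNeeded(str(exc)) from exc
            log.warning("attempt %d/%d failed: %s", attempt, max_attempts, exc)
            if attempt < max_attempts:
                # Linear back-off to ride out intermittent network errors.
                time.sleep(backoff_seconds * attempt)
    notify(f"Job still failing after {max_attempts} attempts; escalating per runbook.")
    raise EscalationNeeded(f"gave up after {max_attempts} attempts")
```

Errors other than `ConnectionError` are not covered by this hypothetical runbook, so they propagate untouched for a person to investigate, and a non-idempotent job is never retried automatically, mirroring the manual process.
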
